Picture it: in-person team meetings with colleagues at Abertay University, virtual discussions with our Horizon EU partners, notes filling shared documents and people brainstorming ideas. The excitement was building for the first tangible sign of progress, the personas and scenarios that would inspire a human-centred blueprint for a research discovery and collaboration platform.

This was my very human start to working on LUMEN last year. I enjoyed getting to know people over coffee, in person and virtually, and finding my place in the project and in the team. In the background, purpose-built Python scripts developed by the team here at Abertay were helping me lead the creation of user personas and scenarios. LLMs supported the analysis of data from interviews I conducted personally, which captured deep insights into the perspectives of researchers across Europe. Importantly, participants ranged from PhD students to professors, and our approach to generating LUMEN’s user insights blended warmth and collaboration: people first, AI in support.

I prioritised the human voice by interviewing researchers across four domains: Molecular Dynamics, Earth System Sciences, Mathematics, and the Social Sciences and Humanities. These conversations revealed what researchers do, where they get stuck, and what “good” looks like in their discovery journeys. What participants shared during our interviews and co-design workshops is now guiding the design of the LUMEN platform’s user experience and user interface.

To create this shared picture, we used LLM-assisted thematic analysis. While the model drafted codes and grouped themes, we set the framework, wrote the prompts, and reviewed every output, refining or rejecting as needed. In short, AI sped us up, but people stayed in the driver’s seat. I consider myself a good driver!

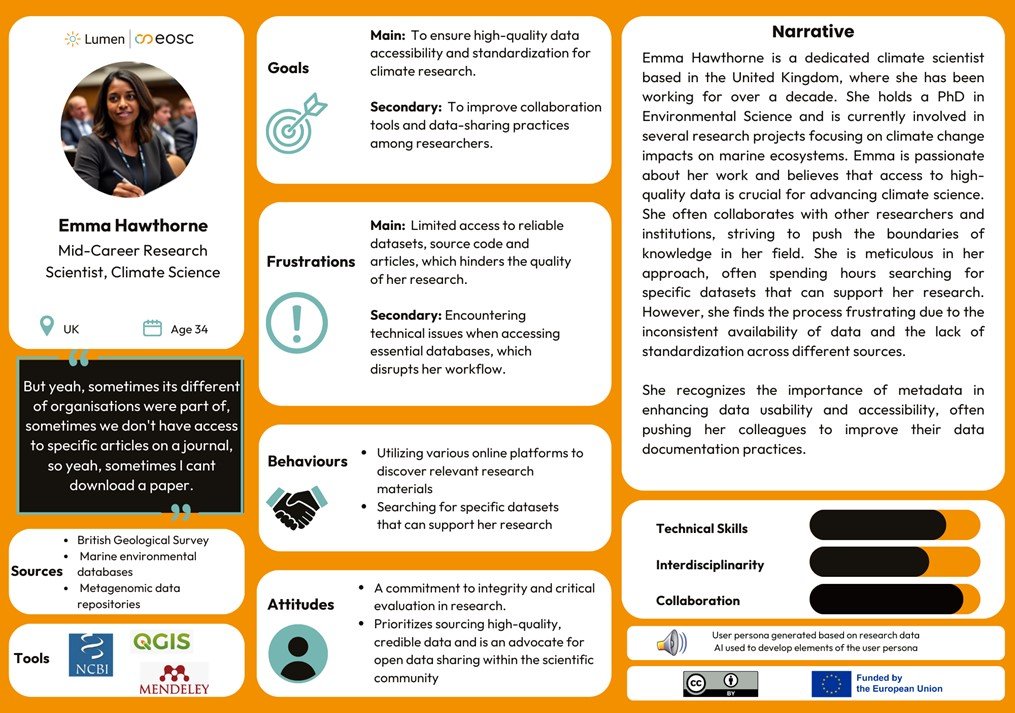

This rhythm (humans strategise, AI composes) allowed us to analyse the data quickly without losing valuable nuance. From that analysis, we created 11 personas with 11 matching scenarios, which we then distilled into 19 user‑facing functionalities that now guide design decisions. I thought I’d share a few of the team’s favourites:

- A LUMEN ecosystem chatbot (AIDA) that gives just‑in‑time answers grounded in a curated knowledge base, with adaptable personality styles.

- Meta‑search and discovery that spans disciplines with meaningful filters and visual cues.

- FAIR‑friendly tools that support good discovery practice without the admin burden.

Crucially, we didn’t just use AI to generate and move on. We validated the personas with our partners through an internal questionnaire, evaluating them for realism and relevance, usefulness, and design value, and then iterated. That’s how details like role framing and goals were fine-tuned to feel real and actionable. Two things made this process feel right.

Firstly, the pipeline was traceable. Because it was systematic, we could follow a clean thread from a functionality (e.g., the chatbot) back through design ideas and scenario steps, all the way to the interview discussions that inspired it. It’s a living audit trail that keeps user voices present in every design decision.

Secondly, the responsible guardrails we adopted provided accountability and integrity. All processing took place within our university’s secure Azure environment, with GDPR‑aligned governance and a simple principle: the model does not retain or train on our data. We remain accountable for the findings.

And yes, we talked about bias and hallucination. Our safeguard was straightforward: start from real interviews, use AI to accelerate recognised qualitative analysis steps, and keep humans in the loop for judgment calls. We documented where the model helped, where we intervened, and why.

What followed was the fun part: creative, exploratory co‑design workshops and testing to shape these functionalities into interfaces people will genuinely enjoy using. Here, people prioritise together (hello, MoSCoW) and keep that traceability thread intact as choices become more concrete.

Looking back, the biggest win wasn’t speed (though we gained that); it was shared clarity. AI helped us turn scattered insights into a common purpose, while the team (and our users) decided where to go. That’s the kind of human‑centred, AI‑enabled research culture I want to keep nurturing: responsible, thoughtful, transparent, and built for the people it serves.

Want to learn more about the journey of developing the LUMEN personas?

Here you can see an example of a user persona built from research data, with AI used to develop some of its elements. Read more about this in our Deliverable.

This article was written by Diane Morrow, who is a Postdoctoral Research Fellow at Abertay University. The AU team ensures that the LUMEN tools and platforms are truly user-centred. Learn more about the AU team & their work within LUMEN here.